Hello World VR Application with the Oculus Quest 2 and Unity

Learning a new framework or toolset is something I try to do every year. Over the last few years, I've explored a broad range, from TensorFlow, React, Tailwind CSS, Carbon Design System, and Gatsby to FastAPI, Elasticsearch, and more, all beginning with the trusty Hello World approach. I have always wanted to get more experience with 3D modeling using tools like Blender/Maya and 3D game engines like Unity and Unreal. Making virtual reality (VR) apps is something that covers these two areas.

I had 3 days (mostly evenings) to explore; here is what I came up with.

In this post, I won't share much actual code beyond describing what I have done. This is deliberate, as the Oculus Quest API appears to be changing rather rapidly; chances are that any code I show here will be deprecated in the near future. Instead, I'll point to official documentation where possible.

Why VR?

As a researcher in the HCI and Applied ML space, I find virtual reality an increasingly relevant topic. VR is used extensively to simulate the real world, with applications in reinforcement learning (see AirSim from Microsoft), robotics, and other fields. Advances in software and hardware are also making VR more accessible, potentially leading to more consumer usage.

And then, there are increasing conversations on the metaverse (see announcements by Facebook [1] and Microsoft [2]) and how it can help make collaboration more personal and fun [2]. There are also many interesting HCI implications of VR in terms of engagement, learning, task performance, stress/fatigue and even addiction (see the Journal of Virtual Worlds Research as a potential starting point). Interestingly, some research [4] I conducted a while ago showed that virtual worlds help users acquire complex tacit knowledge and are perhaps well suited to learning tasks where the subject is complex/tacit knowledge.

Overall, all of these dimensions make VR an interesting area to explore.

Why the Oculus Quest 2?

While there are a few VR headsets, the Oculus Quest 2 appears to offer the best experience (untethered, inside-out tracking), excellent resolution (1,920 by 1,832 per eye), and great performance, all while being the cheapest. For comparison, other comparable headsets cost significantly more at the time of writing, and most are tethered: HTC Vive Cosmos Elite Virtual Reality System ($899), HTC Vive Pro Eye Virtual Reality System ($1,355), HTC Vive Pro Virtual Reality System ($1,199), HTC Vive Focus 3 (standalone, $1,300).

Learn more on VR headsets here.

Why Unity3D?

You can develop for the Oculus Quest 2 using multiple platforms (e.g., Unity, Unreal, Native development or web). I chose Unity3D for several reasons:

- Multiplatform ecosystem - Games developed in Unity can be built/compiled for multiple target platforms: WebGL, Android, iOS, Oculus, etc.

- Mature community and resources - The Unity3D ecosystem is mature, with a wide array of existing assets, prefabs, etc. that can be reused.

- Excellent learning resources - Unity3D has a great community of developers and great documentation.

Developing for the Oculus Quest 2 - Hello World!

My process for getting to the example in the demo above can be broken down into a few steps. Links to relevant documentation are provided. I used my trusty old 2014 MacBook Pro for this project, but everything should work (even better) on a Windows machine. Note that the Oculus Quest runs Android, so you will see multiple references to Android across the documentation.

Oculus Setup: The Oculus pretty much sets itself up and shows you how to pair with a mobile device. You will need to put it in "developer mode" (documentation here) and set up your developer account.

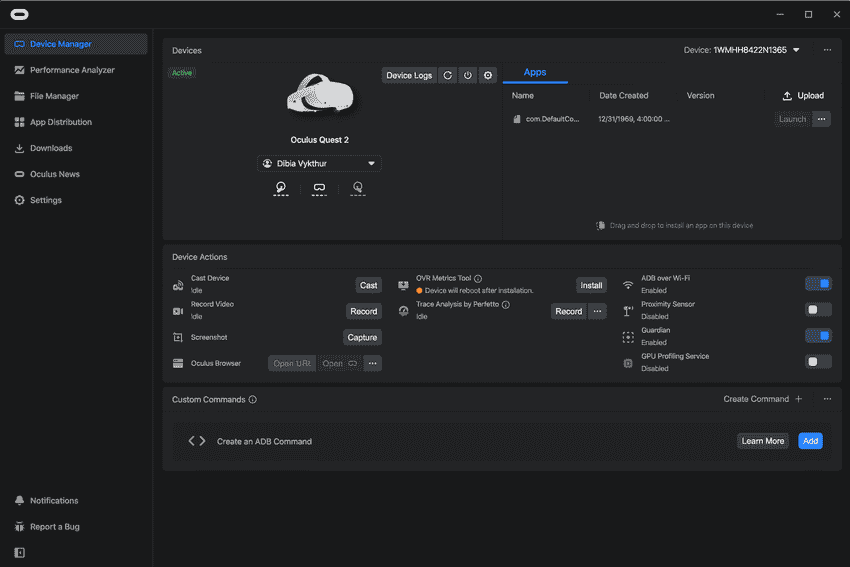

Unity3D Setup: Install Unity3D with Android Build Support (documentation here). Also install the Oculus Developer Hub (ODH) (documentation here). I found ODH to be a useful visual interface for checking that the Oculus was connected and for enabling Wi-Fi debugging of apps.
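Because the Quest runs Android, another way to confirm that the headset is visible to your development machine is Android's `adb devices` command. The sketch below is a minimal Python helper for reading its output. It is not part of the project described here and not an Oculus API: the function names `parse_adb_devices` and `list_connected_devices` are hypothetical, and it assumes `adb` is installed and on your PATH.

```python
import subprocess


def parse_adb_devices(output):
    """Map each device serial to its state from `adb devices` text output."""
    devices = {}
    for line in output.splitlines():
        line = line.strip()
        # Skip blank lines, the header line, and adb daemon status lines.
        if not line or line.startswith("List of devices") or line.startswith("*"):
            continue
        parts = line.split()
        if len(parts) >= 2:
            serial, state = parts[0], parts[1]
            devices[serial] = state
    return devices


def list_connected_devices():
    """Run `adb devices` (assumes adb is on PATH) and parse the result."""
    result = subprocess.run(
        ["adb", "devices"], capture_output=True, text=True, check=True
    )
    return parse_adb_devices(result.stdout)


if __name__ == "__main__":
    # The serial below is a made-up placeholder, not a real device.
    sample = "List of devices attached\n1WMHH0000000\tdevice\n\n"
    print(parse_adb_devices(sample))
```

A state of `device` means the headset is connected and authorized; `unauthorized` usually means the USB debugging prompt inside the headset has not been accepted yet.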

Getting Familiar with Unity3D - Creating a scene, adding interaction using controls, building and testing on the Oculus Quest 2.

Given that I had zero experience with Unity, I went through the Unity Essentials guided tutorial to get familiar with the basics. The tutorial shows how to use the Unity editor and introduces concepts such as game managers, scenes, game objects, assets, packages, etc.

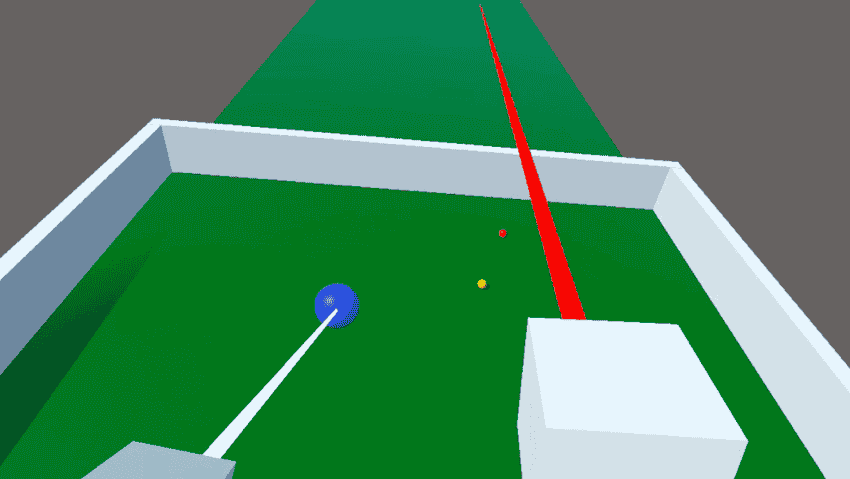

I followed this hands-on tutorial from Oculus to build out parts of the small scene in the demo (documentation here). This tutorial was useful in gaining hands-on experience building things from scratch, e.g., creating walls using 3D cube objects, balls using 3D sphere game objects, and a floor using a 3D plane game object, then adding color and adding scripts to modify behaviour. It also provides guidance on how to build and deploy to the Oculus Quest 2 device. With the Oculus Link, live debugging is much faster (the app can be simulated in the Unity editor); however, the Link is currently only supported on a Windows PC.

I ran into errors using the Oculus OVR plugin for mapping controls (documentation here) and then switched to the more generic Unity XR Interaction Toolkit, with better success. I found this YouTube video on the Unity XR Interaction Toolkit helpful (video here). It provided insights on how to set up the XR Interaction Toolkit, use the XR camera rig (which automatically maps to the headset view), and map controllers to the XR camera rig.
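To make the controller mapping a bit more concrete: joystick-based navigation boils down to rotating the 2D thumbstick input by the headset's heading, so that "forward" on the stick follows where you are looking. The Python sketch below is an engine-agnostic illustration only. It is not the XR Interaction Toolkit's implementation (in Unity you would write C# or use the toolkit's locomotion components), and the function name, the dead-zone value, and the axis convention (yaw 0 faces +Z, positive yaw turns toward +X) are all assumptions.

```python
import math


def thumbstick_to_displacement(stick_x, stick_y, head_yaw_deg,
                               speed, dt, dead_zone=0.1):
    """Convert thumbstick input into a (dx, dz) step on the floor plane.

    stick_x, stick_y: thumbstick axes in [-1, 1] (right and forward positive).
    head_yaw_deg: headset heading in degrees around the vertical axis.
    speed: movement speed in metres per second.
    dt: time since the last frame in seconds.
    """
    # Ignore tiny inputs so a resting thumbstick does not cause drift.
    if math.hypot(stick_x, stick_y) < dead_zone:
        return 0.0, 0.0

    yaw = math.radians(head_yaw_deg)
    # Assumed convention: yaw 0 faces +Z; positive yaw turns toward +X.
    forward_x, forward_z = math.sin(yaw), math.cos(yaw)
    right_x, right_z = math.cos(yaw), -math.sin(yaw)

    # Combine the stick axes along the head-relative right/forward directions.
    dx = (stick_x * right_x + stick_y * forward_x) * speed * dt
    dz = (stick_x * right_z + stick_y * forward_z) * speed * dt
    return dx, dz


if __name__ == "__main__":
    # Pushing the stick fully forward while facing +Z moves along +Z.
    print(thumbstick_to_displacement(0.0, 1.0, 0.0, speed=2.0, dt=0.5))
```

Keeping the vertical component out of the result means looking up or down does not lift the player off the floor, which is usually what you want for simple walking-style navigation.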

Next Steps

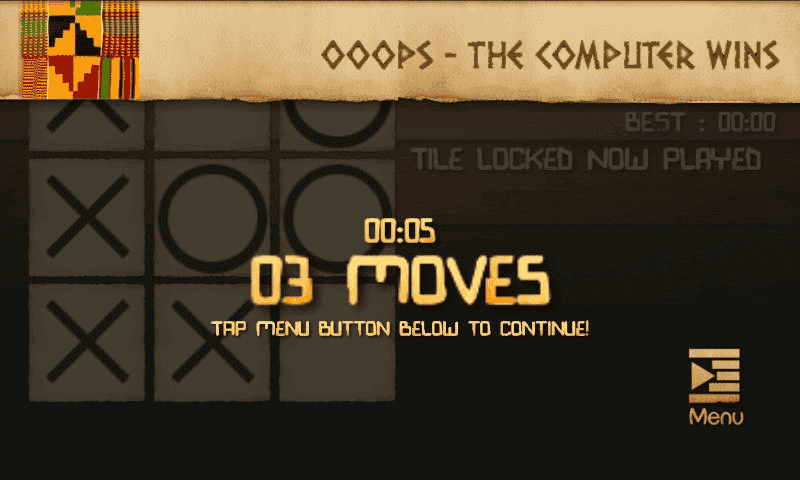

A specific task I'd like to accomplish with VR is to replicate a 3D, interactive version of Gidigames, which I built about 10 years ago. Gidigames is a collection of short games (slider puzzles, language learning slasher-style games, noughts and crosses) focused on African languages and culture.

To achieve that, there is still a tonne of work to be done! Some are listed below:

- Blender! Learn to create assets using a 3D modeling tool like Blender. I have already found some great (short and sweet) tutorials by Stefan Persson.

- Design/storyboard a 3D version for Gidigames

- Build additional expertise with Unity3D - enabling 2D/3D navigation, creating UI panels, switching between multiple game scenes etc.

- Incrementally stitch it all together! Import artifacts created in Blender. A benefit of using a game engine like Unity3D is that, with some effort, you can build a game that is playable on multiple platforms (think Android, iOS, Web, PC, Mac, and Oculus releases!).

Yikes! There is quite a bit to be done! Will I find time to do it all? Maybe. Like I mentioned in my 2021 reflection, the goal is to try!

If you are exploring VR (dev work or gaming) or any new topic, reach out on Twitter!

1/3 Over the break, I explored developing for Oculus Quest + Unity3D.

- 3D environment (plane, balls, walls, text)

- Added simple grab interactions using controllers (pick and drop balls)

- Joystick based navigation of the environment.

My thoughts 🧵 https://t.co/DyY4MWbdKL pic.twitter.com/LBJBwg4rBR

Victor Dibia (@vykthur), January 4, 2022

References

- Facebook’s CEO on why the social network is becoming ‘a metaverse company’. https://www.theverge.com/22588022/mark-zuckerberg-facebook-ceo-metaverse-interview

- Mesh for Microsoft Teams aims to make collaboration in the ‘metaverse’ personal and fun. https://news.microsoft.com/innovation-stories/mesh-for-microsoft-teams/

- Wagner, Christian, and Victor Dibia. "Exploring the Effectiveness of Online Role-Play Gaming in the Acquisition of Complex and Tacit Knowledge." Issues in Information Systems 14.2 (2013).

Whats New in VR 2022? Inside Out Tracking, Passthrough Rendering, Realtime Handtracking

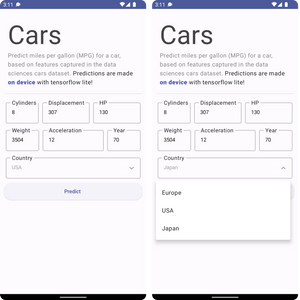

Whats New in VR 2022? Inside Out Tracking, Passthrough Rendering, Realtime Handtracking How to Build An Android App and Integrate Tensorflow ML Models

How to Build An Android App and Integrate Tensorflow ML Models Top 10 Machine Learning and Design Insights from Google IO 2021

Top 10 Machine Learning and Design Insights from Google IO 2021 2019 Year in Review

2019 Year in Review How to Render Blender 3D Models in React with React Three Fiber

How to Render Blender 3D Models in React with React Three Fiber Introducing Peacasso: A UI Interface for Generating AI Art with Latent Diffusion Models (Stable Diffusion)

Introducing Peacasso: A UI Interface for Generating AI Art with Latent Diffusion Models (Stable Diffusion) 10 Predictions on the Future of Cloud Computing by 2025 - Insights from Google Next Conference

10 Predictions on the Future of Cloud Computing by 2025 - Insights from Google Next Conference